AI Decisioning Agent Builder

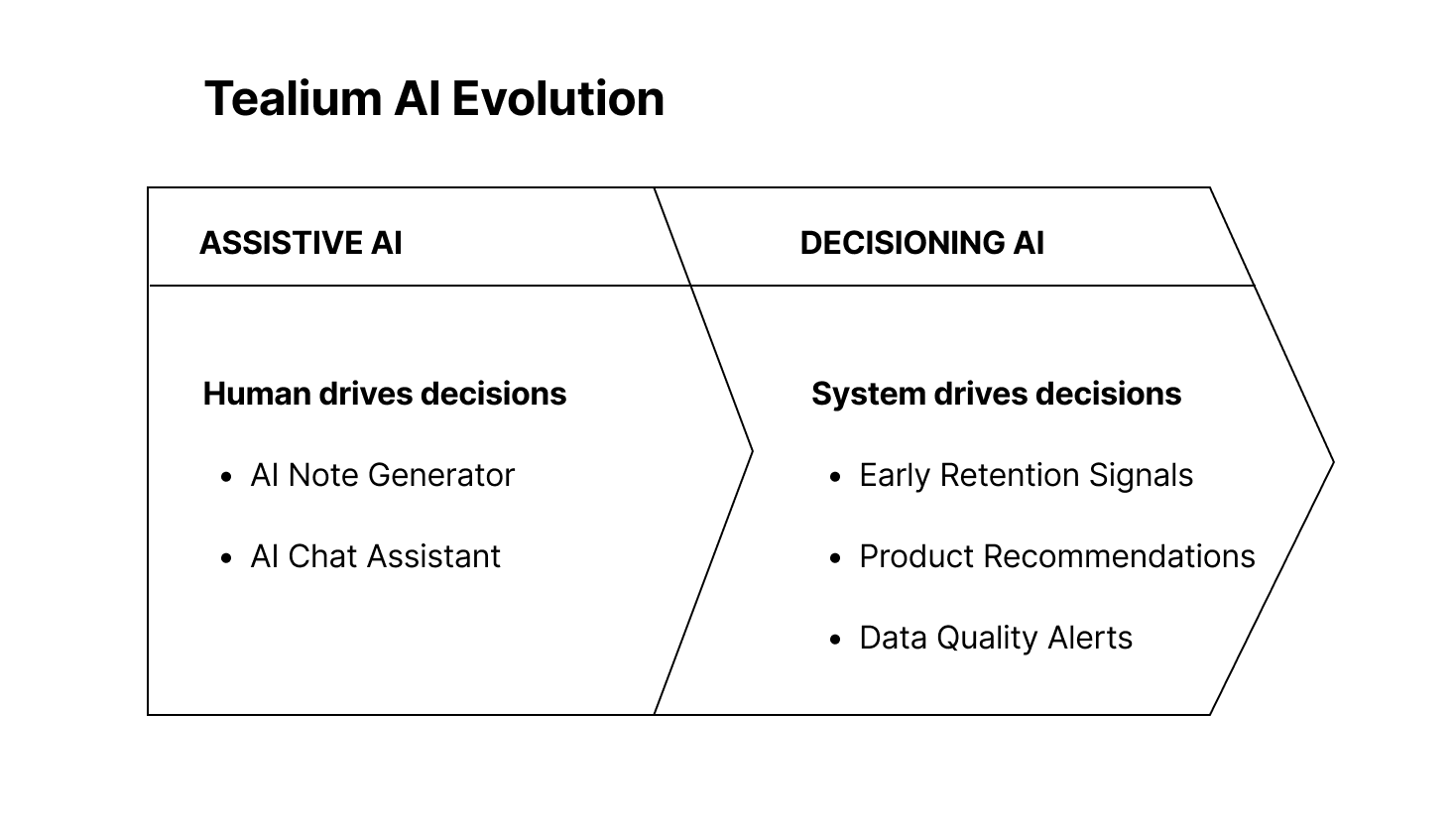

Evolving from Assistive AI to Autonomous Decisioning

Overview

Tealium is an enterprise Customer Data Platform that helps companies collect, unify, transform and activate customer data in real time to power personalized marketing and customer experiences across channels.

I led the evolution of Tealium’s AI capabilities: first designing the AI Note Generator and AI Chat Assistant, then defining and launching the company’s first AI Decisioning Agent framework.

This shifted the platform from assistive AI to governed, predictive automation embedded directly into audience creation and activation workflows.

Impact

Platform

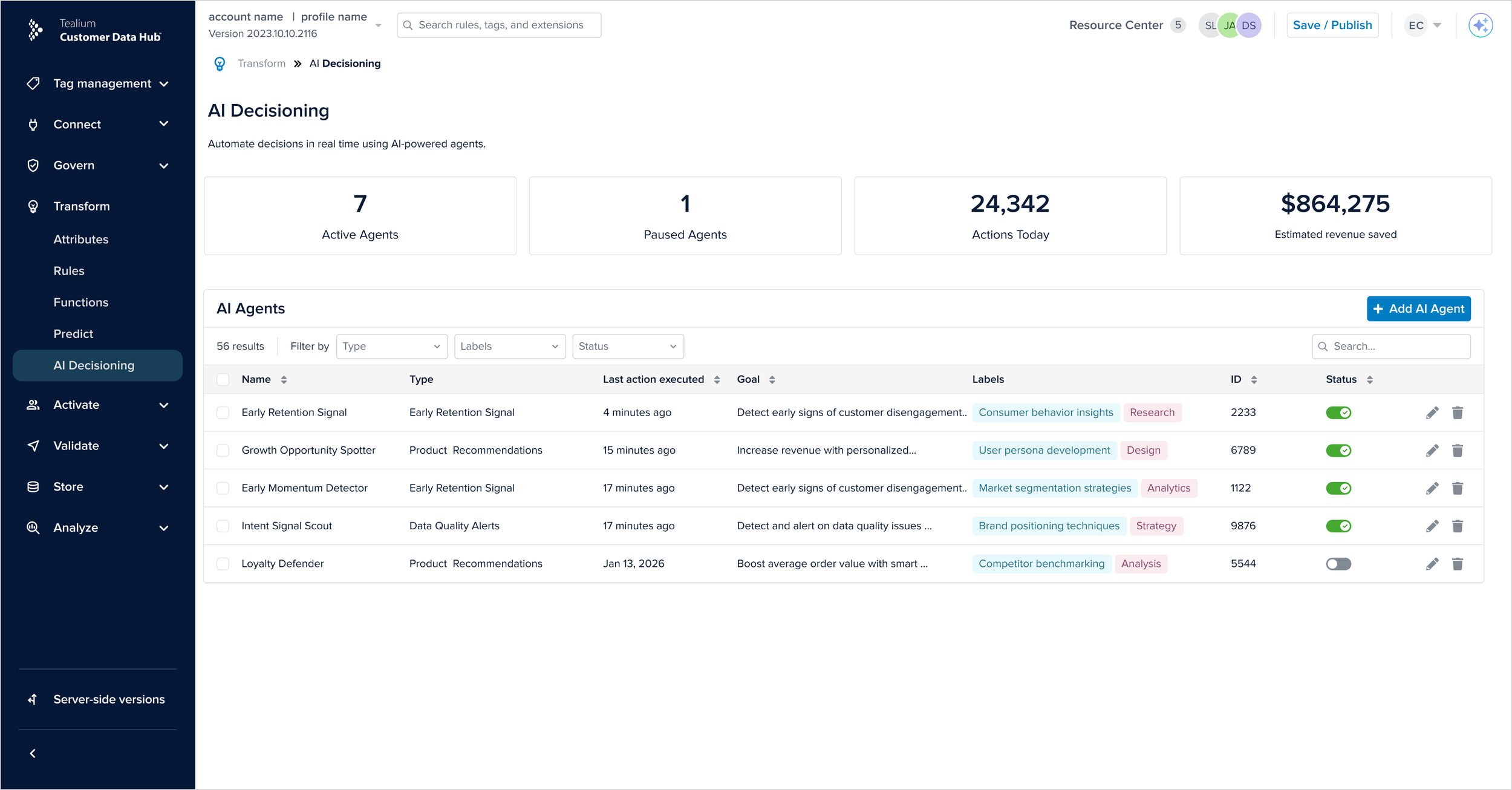

Introduced Tealium’s first AI decisioning capability

Established a scalable, reusable agent framework for predictive automation

Built governance model for automated AI workflows

Defined governance lifecycle reused across AI initiatives

Business

Directly helped secure the company’s largest deal of the year: a $10M+ enterprise win over Adobe with Crédit Agricole Group, Europe’s third-largest bank.

Strengthened AI positioning in competitive enterprise sales cycles

Contributed to Tealium’s recognition as a Leader in the 2025 Gartner Magic Quadrant for CDPs

Advanced the platform from AI-assisted workflows to intelligent decisioning infrastructure

Problem

Assistive AI improves productivity.

Decisioning AI influences customer outcomes.

Before this initiative:

AI did not create audiences

AI did not trigger connectors

AI did not automate lifecycle decisions

No governance model existed for automated activation

Introducing AI into activation workflows raised enterprise-level risks:

Who owns the decision?

What is editable vs system-managed?

How do we prevent over-automation?

How do we make invisible processing trustworthy?

The challenge was not introducing AI.

It was designing accountable AI.

My Role & Contribution

Foundation for Assistive AI

Designed:

AI Note Generator

AI Chat Assistant

Established:

AI interaction patterns

Transparency standards

Trust guidelines

Decisioning AI Leadership

I led:

UX Strategy

Defined decisioning AI as a new product category

Positioned automation as structured infrastructure

Proposed governance-first lifecycle (Draft → Review → Published)

Framed Early Retention Signals as the initial release use case

Structured the roadmap across the MVP and later phases

System & Interaction Design

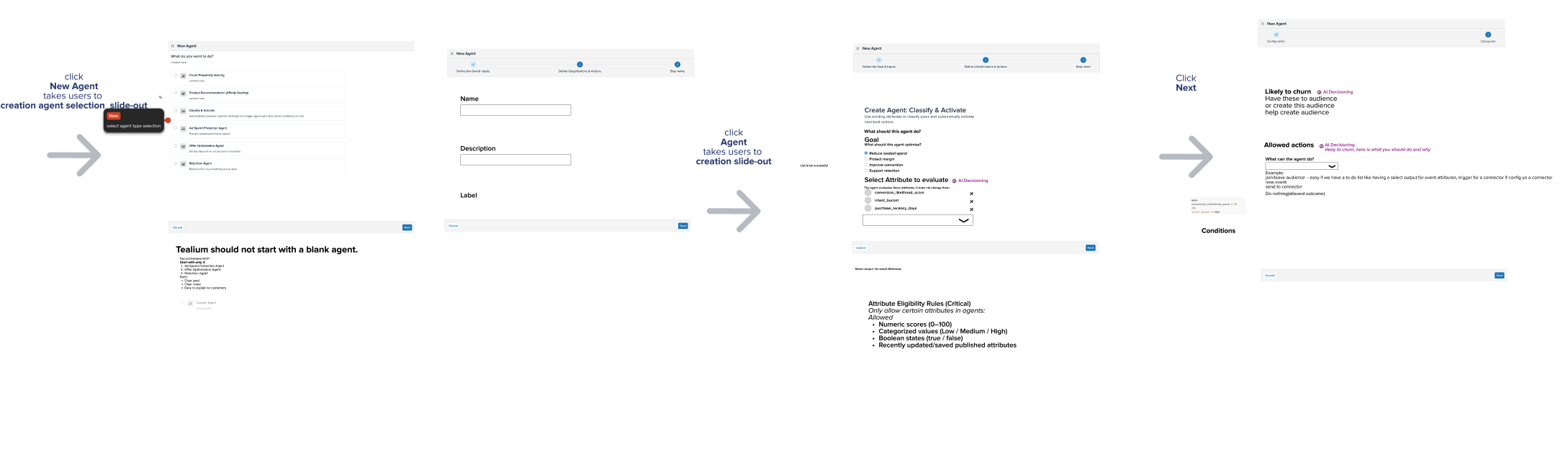

Designed the end-to-end agent builder flow

Defined editable vs system-managed boundaries

Structured inspectable inputs for trust

Designed system-generated output architecture

Introduced performance insight layer to measure impact

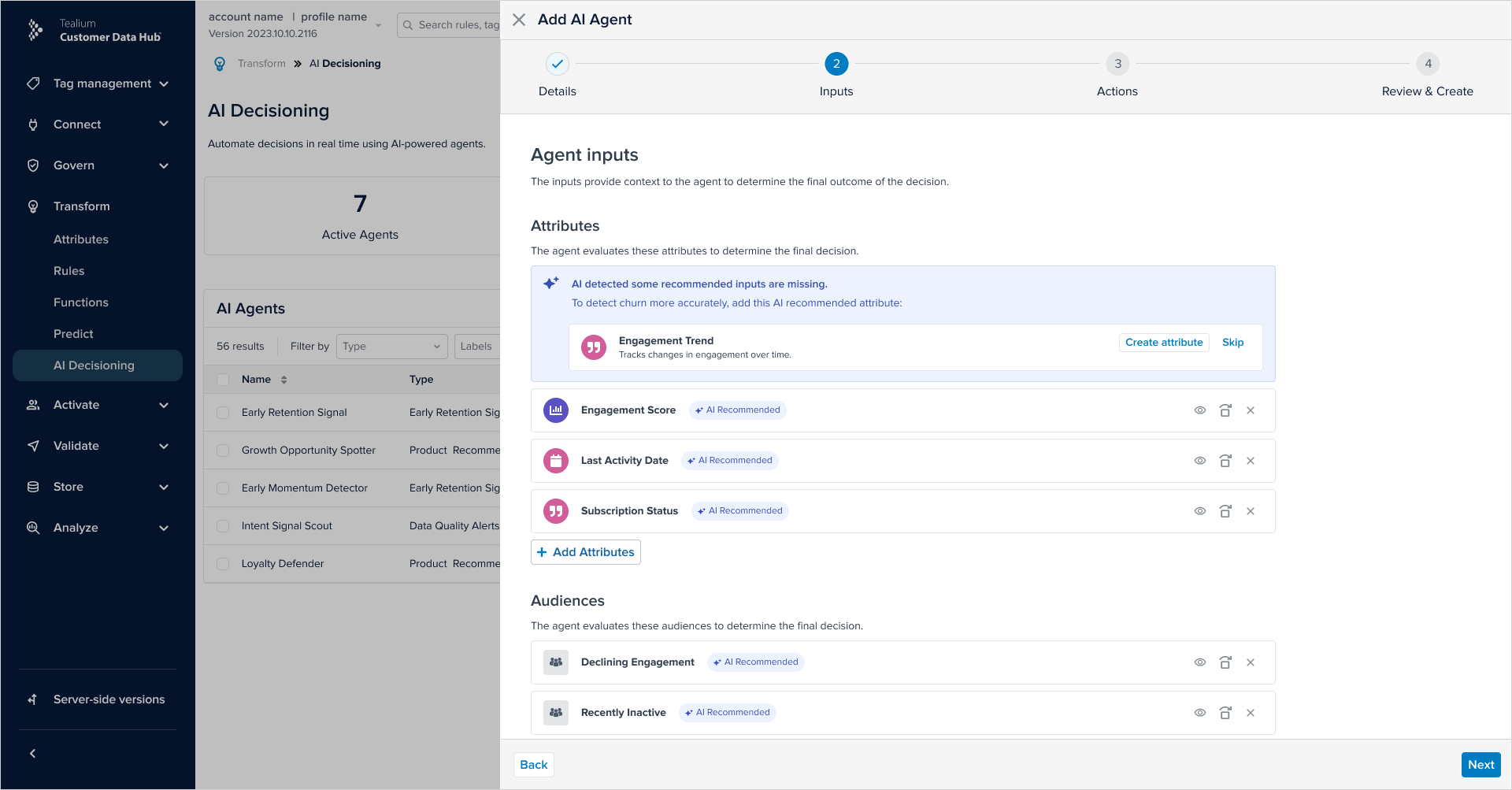

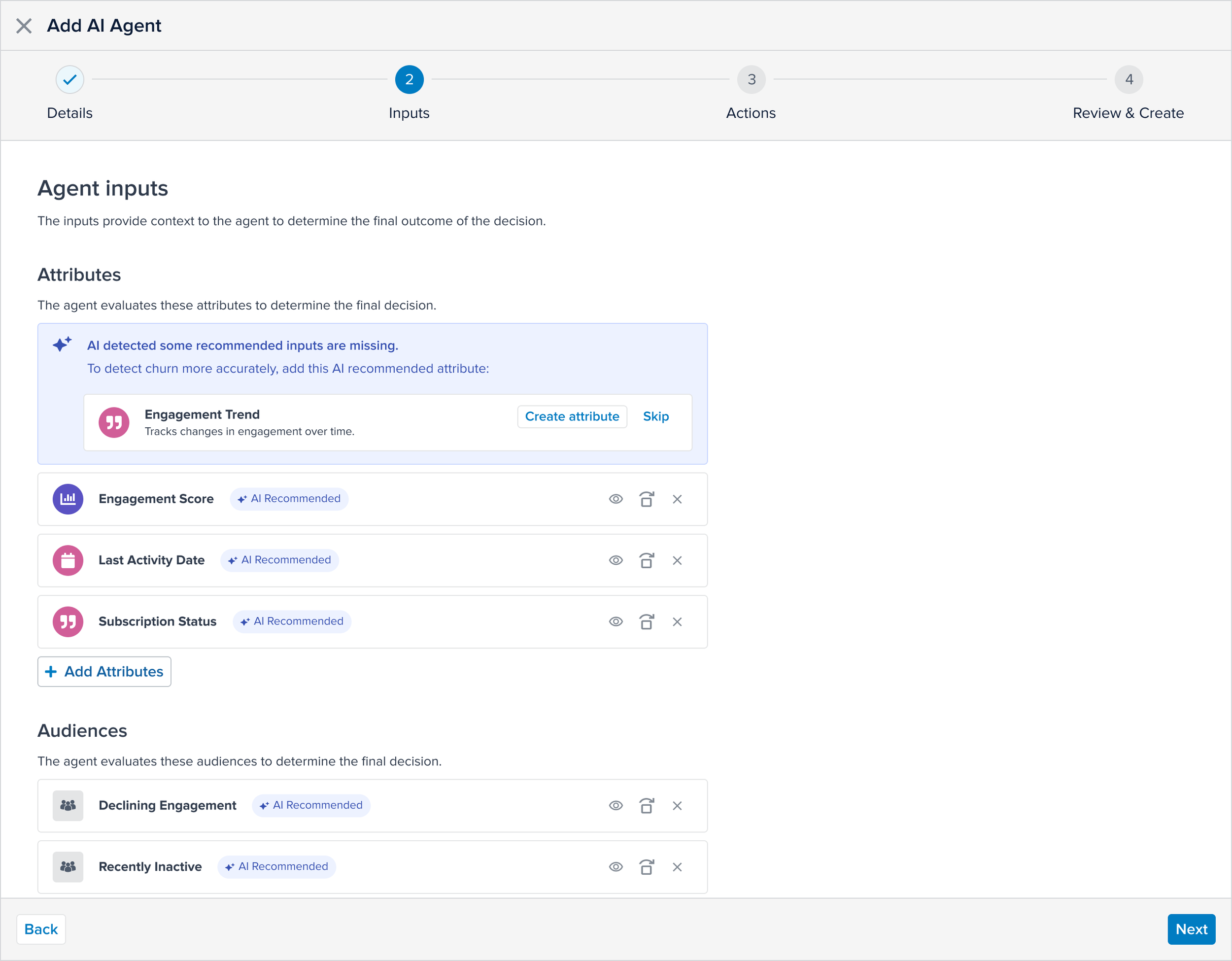

Designed guidance for missing attributes detected by AI

Partnered with Engineering to align on the async architecture

Cross-Functional Alignment

Partnered with Product to define automation scope

Worked with Engineering on architecture implications

Partnered with Customer Success to validate churn pain points

Hosted an Early Product Workshop with AI/ML experts and domain leaders

Aligned Activation and Audience teams for safe integration

This introduced a new AI category into the platform.

Validation Strategy

Partnered closely with PM and Customer Success and spoke directly with enterprise customers, including:

Country Road Group

Abercrombie & Fitch

AstraZeneca

ASICS

Sapient

EMF

Liberty Mutual

Across conversations and renewal cycles, we identified:

Repeated manual churn segmentation workflows

Duplicated lifecycle rules across teams

Hesitation toward black-box automation

Insight:

Enterprise customers wanted automation — but with visibility and control.

Trade-Off Decisions

Automation vs Control

No auto-publish

Required Review state before activation

Transparency vs Cognitive Load

Exposed model inputs clearly

Hid the background creation of event attributes

Surfaced system-generated artifacts in summary

Flexibility vs Model Integrity

Inputs editable

System-generated audiences locked

Speed vs Governance

Avoided one-click automation

Required structured configuration before publishing

Each decision protected enterprise trust.

End-to-End Agent Flow

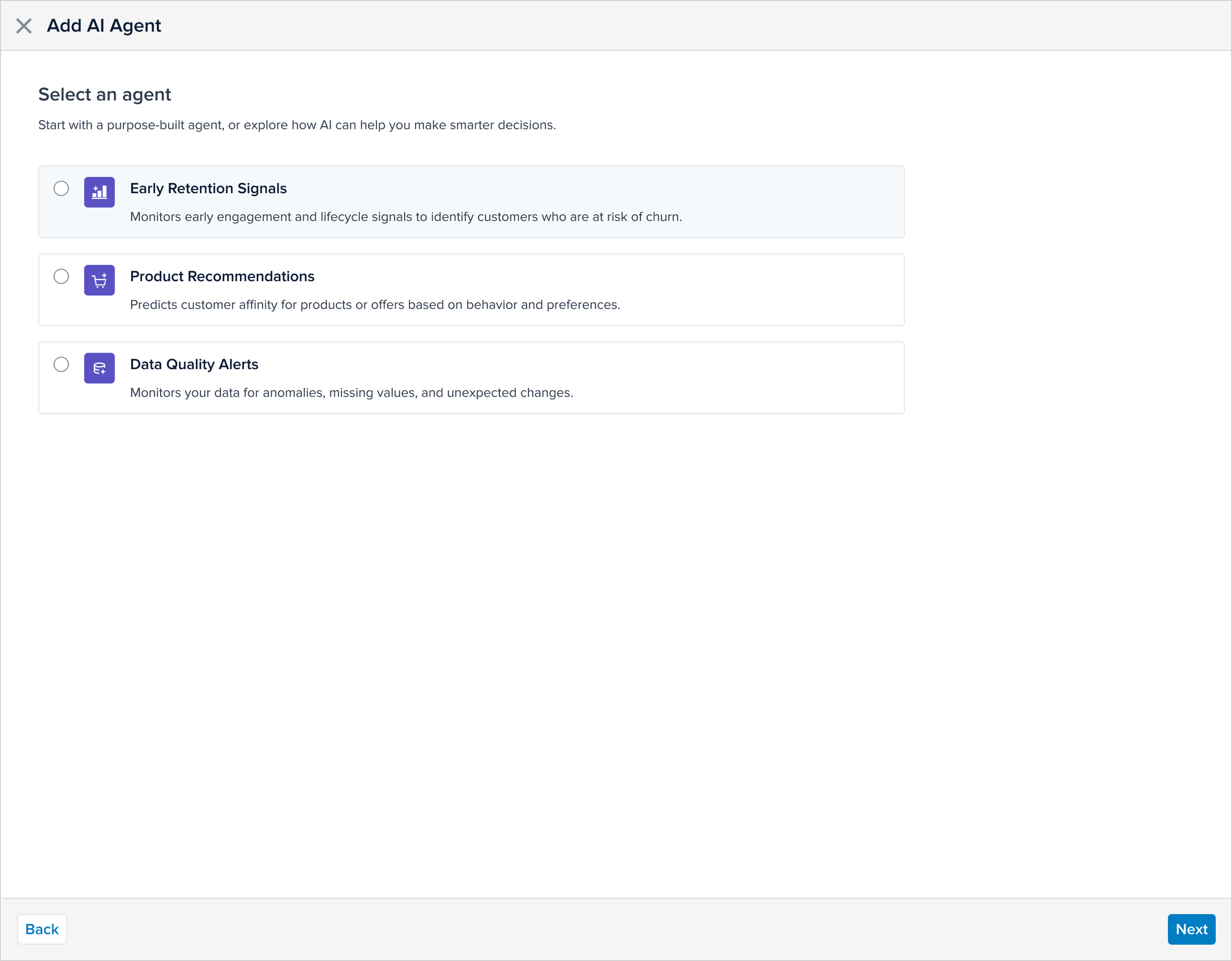

Step 1 — Select Decision Objective

Initial release: Early Retention Signals (Churn Detection)

Step 2 — Review & Configure Inputs

Preloaded attributes surfaced

Users can replace or add inputs

Model inputs visible and inspectable

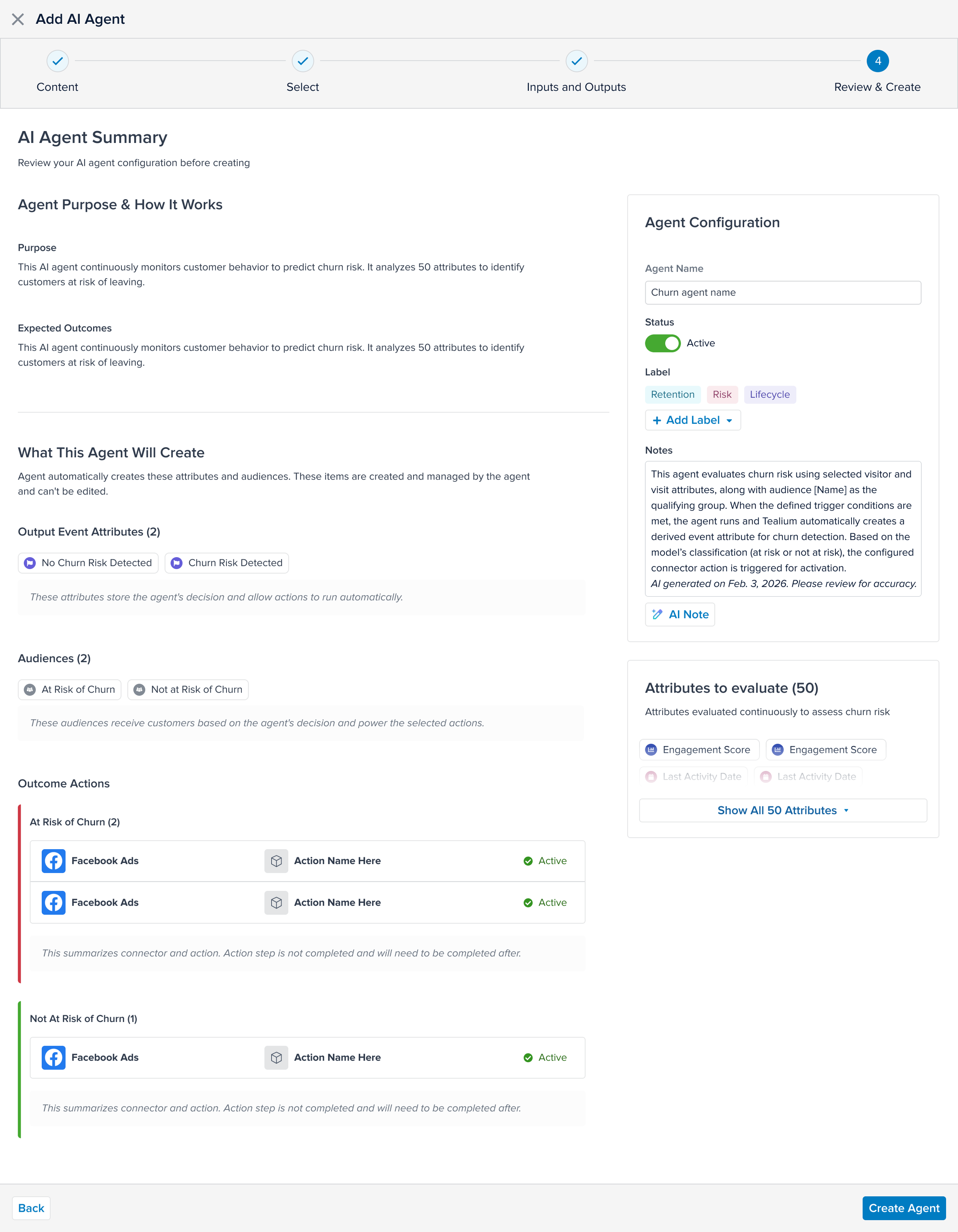

Step 3 — System-Generated Outputs

The system automatically generates:

At-risk audience

Not-at-risk audience

Supporting output attributes

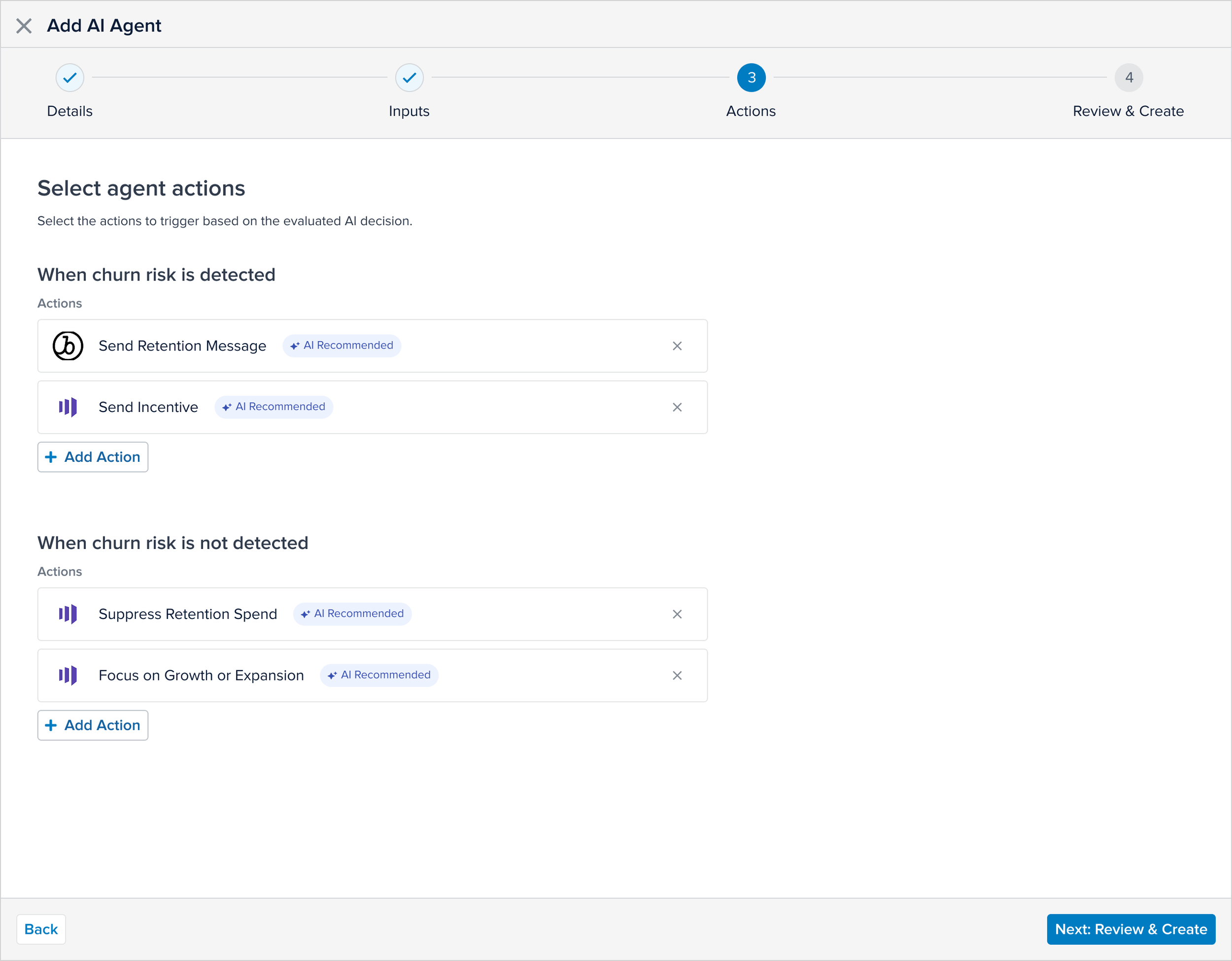

Step 4 — Activation Configuration

Audience → Connector → Action

AI influences activation but does not hide it.

Human oversight remains intact.

Step 5 — Lifecycle Governance

Draft → Configured → Review Needed → Published

AI is treated as a managed entity, not a toggle.
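Read as a system, this lifecycle is a small state machine. The TypeScript below is a minimal sketch under stated assumptions, not Tealium’s implementation: the state names mirror the stages above, while the transition table, the `advance` function, the `approvedBy` field, and the rule that editing a published agent returns it to Draft are hypothetical.

```typescript
// Lifecycle stages as described above; identifiers are illustrative.
type AgentState = "Draft" | "Configured" | "ReviewNeeded" | "Published";

// Hypothetical transition table. No state other than "ReviewNeeded"
// can move to "Published", which encodes the "no auto-publish" rule.
const transitions: Record<AgentState, AgentState[]> = {
  Draft: ["Configured"],
  Configured: ["Draft", "ReviewNeeded"],
  ReviewNeeded: ["Configured", "Published"],
  Published: ["Draft"], // assumption: editing a live agent sends it back to Draft
};

interface Transition {
  to: AgentState;
  approvedBy?: string; // hypothetical: the human reviewer who signed off
}

// Returns the next state, or throws if the move breaks the governance rules.
function advance(current: AgentState, t: Transition): AgentState {
  if (transitions[current].indexOf(t.to) === -1) {
    throw new Error(`Invalid transition: ${current} -> ${t.to}`);
  }
  // Publishing always requires an explicit, named human approval.
  if (t.to === "Published" && !t.approvedBy) {
    throw new Error("Publishing requires a named reviewer");
  }
  return t.to;
}
```

Keeping the rules in one transition table makes the human checkpoint structural rather than a UI convention: a configured agent cannot skip review, and even a reviewed agent cannot go live without someone accountable for the decision.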

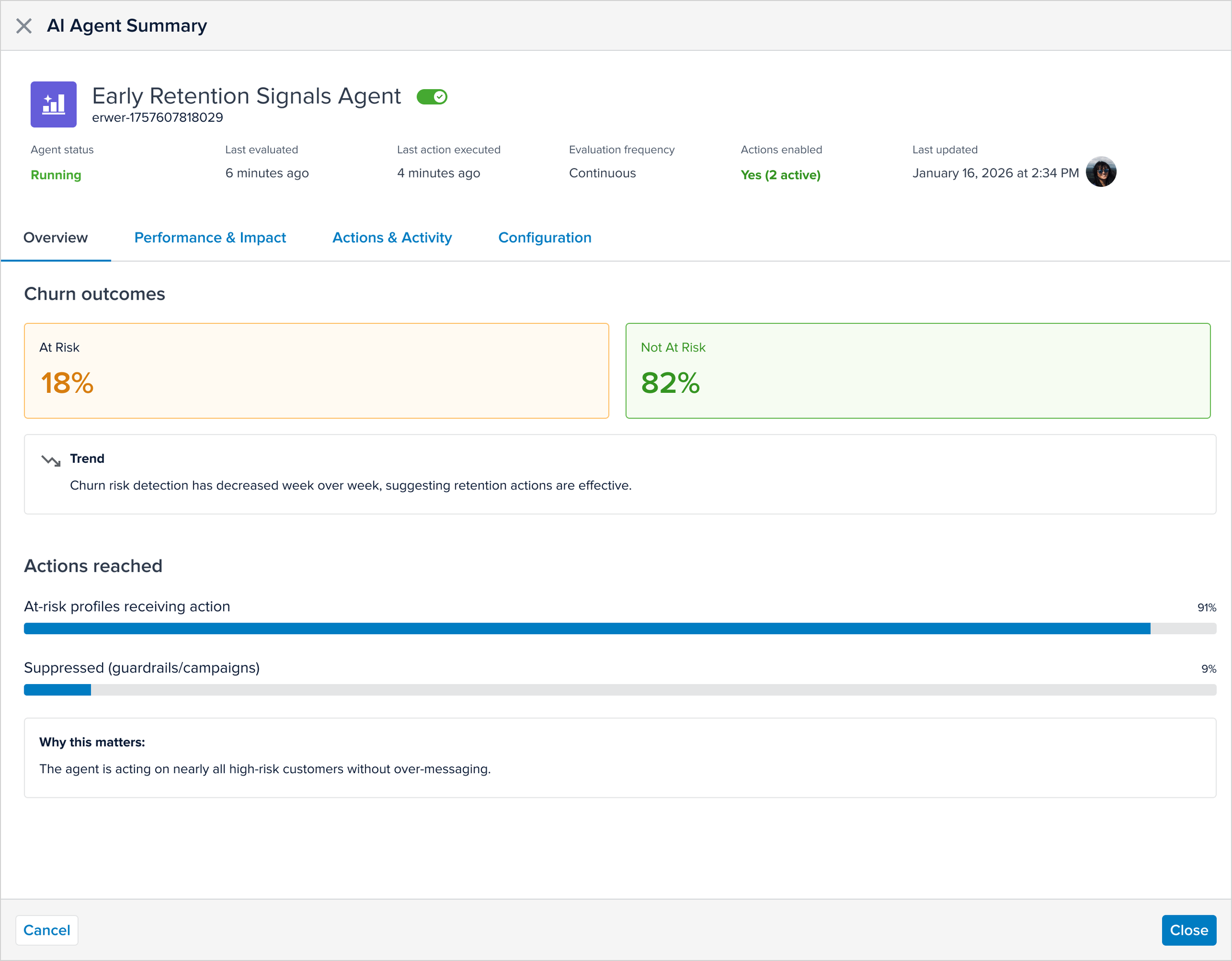

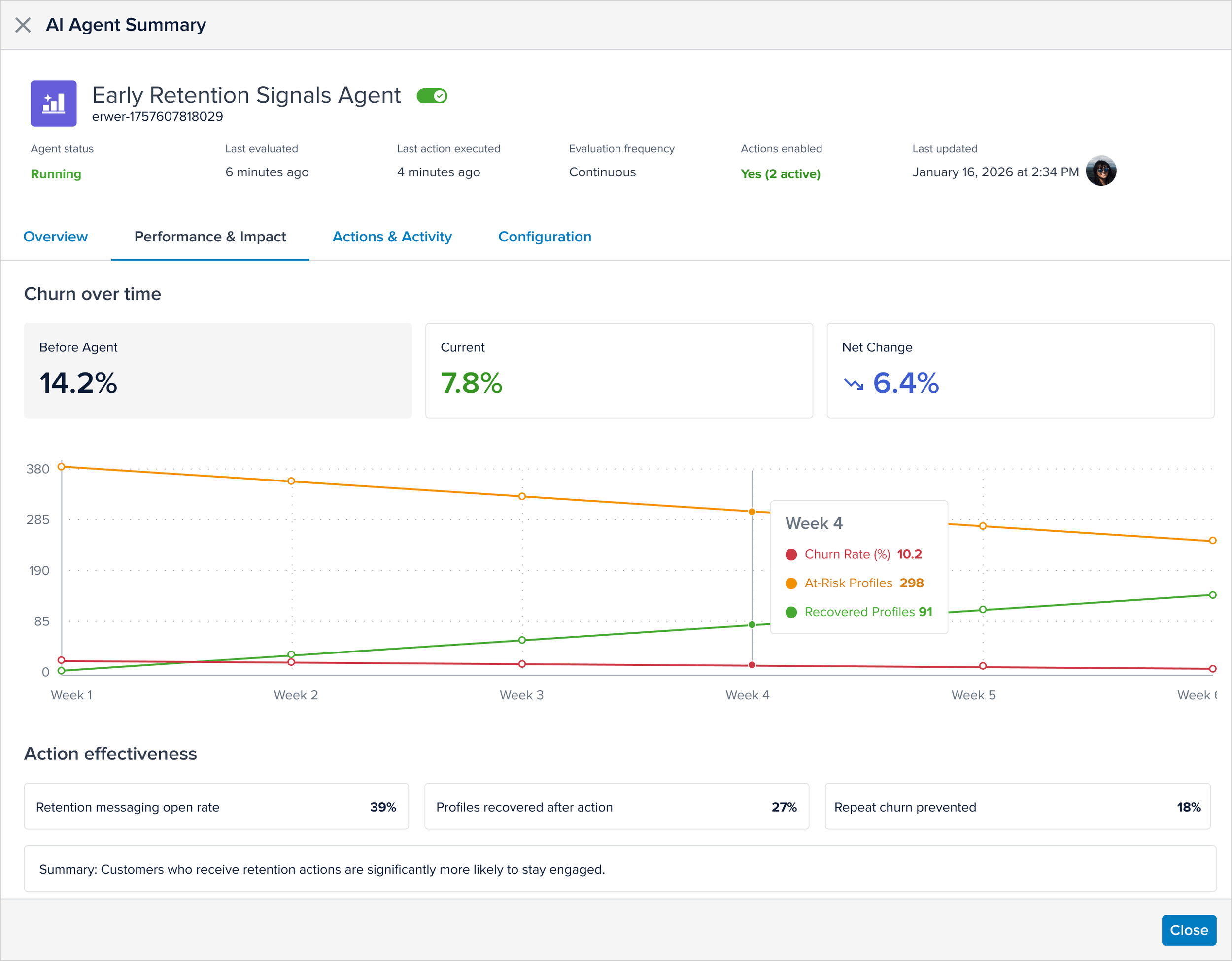

Step 6 — AI Summary

A summary slide-out appears after agent creation as a quick decision checkpoint

Explains in plain language what the agent is doing, summarizing insights rather than presenting raw data

Shows expected impact, updates performance as real data arrives, and provides controls to adjust strategy

Why it matters: users can understand and monitor results in one place

Phased Delivery Strategy

To balance speed, governance, and technical feasibility, I collaborated with the Scrum team (Product and Engineering) to define a staged rollout.

Rather than delay launch for full analytics infrastructure, we sequenced core decisioning first, followed by advanced performance insights.

Phase 1 — MVP

Agent creation flow

Inspectable inputs

System-generated audiences

Governance lifecycle (Draft → Review → Published)

Inline missing attribute detection

Initial performance visibility

Goal: Introduce accountable decisioning safely and quickly.

Phase 2 — Advanced Insights

Before vs after churn comparison

Ongoing agent performance tracking

Optimization recommendations

Expanded explainability

This phase required additional backend investment, so it followed the MVP release.

Solution Validation

Early Product Workshop

Cross-functional pressure test with Product, Engineering, AI/ML, and Customer Success to validate governance boundaries and automation risk.

Usability Testing (UserTesting.com)

Ran moderated and unmoderated studies to assess:

Clarity of what the agent will do before publish

Understanding of editable vs system-managed logic

Confidence configuring inputs and activation

Whether lifecycle states (Draft/Review/Published) reduced automation anxiety

Insights informed guardrails, labeling, and summary clarity before rollout.

Direct User Feedback

Validated input transparency, trust in generated audiences, and activation oversight expectations.

Internal Release

Refined lifecycle states, summary explanations, and background artifact communication.

Early External Release

Monitored configuration completion, activation adoption, and Customer Success hesitation signals.

What I Would Improve

Surface confidence scoring in the UI

Add explainability summaries

Improve agent performance dashboards

Deliver optimization recommendations over time

Automation is phase one. Adaptive AI is phase two.